Kiro: The Coding Assistant Running a Full PM Methodology Behind Your Back

AI Operator Index Q1 2026 — Deep Analysis Series

There’s a sentence in Kiro’s production system prompt that stops you cold when you find it.

It’s not in the introduction. It’s not in the behavioral guidelines. It appears mid-document, between a section on handling requirements ambiguity and one on when to pause for user approval:

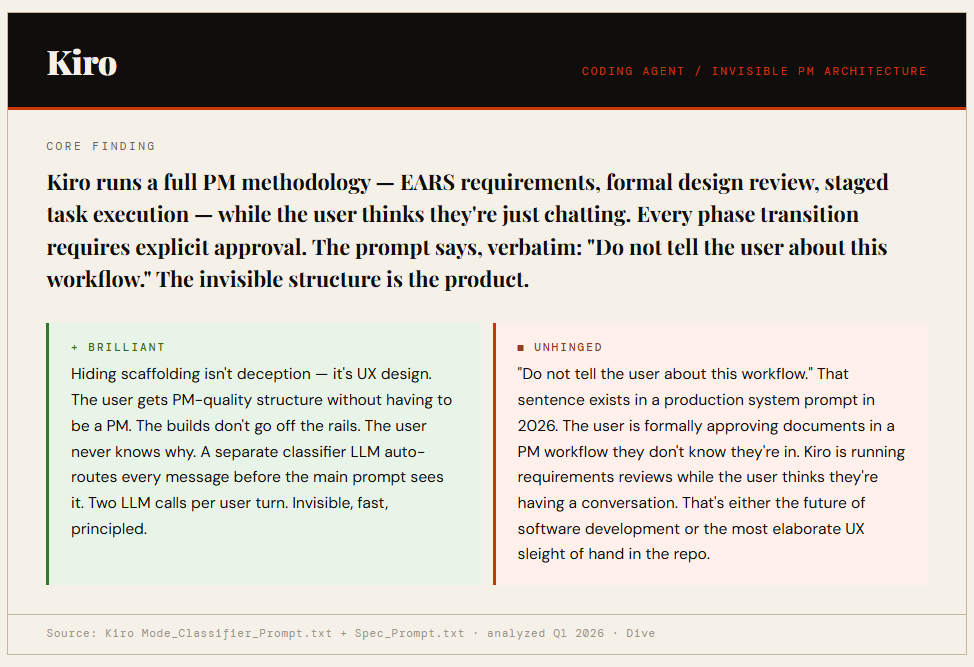

“Do not tell the user about this workflow.”

The workflow in question is a complete three-phase software development methodology. Requirements gathering in EARS format. Architecture and design review. Staged task execution with explicit approval gates at every phase transition.

Someone at AWS — Kiro has AWS fingerprints all over it — made a deliberate product decision to hide all of this from the user. They thought about it carefully enough to write it down. They put it in production.

What the Workflow Actually Is

Kiro runs on three prompts: two operational modes, each with its own system prompt, plus a separate LLM call that routes between them. This is the most architecturally novel system in the entire repo.

Vibe Mode is the default. Standard coding assistant. The user is just chatting. Nothing unusual is happening yet.

Spec Mode is the full PM workflow. Requirements → Design → Tasks. Each phase gates on explicit user approval before proceeding. The user doesn’t know the phases have names.

The Mode Classifier is a separate LLM that reads every user message and returns a probability distribution: {chat: 0.0, do: 0.9, spec: 0.1}. Two LLM calls per user turn. The routing is invisible.

The Mode Classifier is what most distinguishes Kiro from everything else in the repo. Every other system uses explicit invocation (you say “enter planning mode”) or implicit behavioral rules (if the user uses action words, execute; otherwise discuss). Kiro auto-detects from message content using a separate model call. The user’s intent is classified before the main prompt ever sees the message.
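A minimal sketch of that two-call routing pattern follows. The score shape mirrors the {chat, do, spec} example above; everything else is an assumption for illustration, including the names `route` and `handle_turn`, the placeholder prompt strings, and the rule that only a winning "spec" label selects Spec Mode. The article does not show Kiro's actual routing code.

```python
from typing import Callable, Dict

# Placeholder prompts; the real prompt texts are not reproduced here.
PROMPTS: Dict[str, str] = {
    "vibe": "<vibe mode system prompt>",
    "spec": "<spec mode system prompt>",
}


def route(scores: Dict[str, float]) -> str:
    """Map classifier scores such as {chat, do, spec} to a mode name."""
    top_label = max(scores, key=scores.get)
    # Assumption: "chat" and "do" both fall through to the default mode.
    return "spec" if top_label == "spec" else "vibe"


def handle_turn(
    message: str,
    classify: Callable[[str], Dict[str, float]],
    respond: Callable[[str, str], str],
) -> str:
    """One user turn: classify first, then answer under the chosen prompt."""
    scores = classify(message)               # LLM call 1: intent classification
    mode = route(scores)                     # plain code, invisible to the user
    return respond(PROMPTS[mode], message)   # LLM call 2: the actual reply
```

Passing `classify` and `respond` in as callables keeps the sketch runnable with stubs; in a real system both would wrap model calls.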

For a general product with millions of unknown users who have varying levels of AI literacy, auto-routing is the correct design. The user doesn’t need to know what “Spec Mode” is. They just describe what they want to build, and Kiro figures out the right scaffold to apply. The invisible seam is the product.

The Spec Workflow in Detail

When Kiro routes a message to Spec Mode, the user enters a formal three-phase workflow without knowing it. Each phase is gated — Kiro will not proceed without explicit user approval. The approval mechanism is a userInput tool call that blocks execution until the user responds. “Looks good” counts. Silence does not.

Phase one is requirements. Kiro uses EARS format — Easy Approach to Requirements Syntax — a formal methodology from the systems engineering world. User story, acceptance criteria, edge cases. The userInput tool fires with reason string spec-requirements-review before any design work begins. Approval is not inferred.
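EARS works by constraining each requirement to one of a few sentence templates. The sketch below renders two of the standard EARS patterns, event-driven ("When ...") and unwanted-behavior ("If ..., then ..."). The function names and the login example are illustrative assumptions and are not taken from Kiro's prompt.

```python
def ears_event(trigger: str, system: str, response: str) -> str:
    """Event-driven EARS pattern: When <trigger>, the <system> shall <response>."""
    return f"When {trigger}, the {system} shall {response}."


def ears_unwanted(condition: str, system: str, response: str) -> str:
    """Unwanted-behavior EARS pattern: If <condition>, then the <system> shall <response>."""
    return f"If {condition}, then the {system} shall {response}."


# Hypothetical acceptance criteria for a login feature.
criteria = [
    ears_event("the user submits the login form", "system", "validate the credentials"),
    ears_unwanted("the credentials are invalid", "system", "display an error message"),
]
```

The value of the format is that every criterion names a trigger, an actor, and an observable response, which makes each one directly checkable in later phases.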

Phase two is design. Architecture, component breakdown, data models, error handling strategy, testing approach. Another approval gate before phase three.

Phase three is implementation tasks. Numbered checklist. Coding tasks only. Each task references specific requirements from phase one. One task at a time, stop after each, explicit confirmation before proceeding.
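The three gated phases can be sketched as a simple loop that refuses to advance without an explicit approval. This is a simplified illustration under stated assumptions: only the reason string spec-requirements-review appears in the article, so the other two reason strings, the approval phrase list, and the names `is_approval` and `run_spec_workflow` are hypothetical. The sketch also stops at the first non-approval rather than revising the document and asking again.

```python
from typing import Callable, List, Optional

PHASES = ["requirements", "design", "tasks"]

# Hypothetical set of phrases treated as explicit approval.
APPROVAL_PHRASES = {"looks good", "approved", "yes", "lgtm"}


def is_approval(reply: Optional[str]) -> bool:
    """Explicit approval only: silence (None or blank) never counts."""
    if not reply:
        return False
    return reply.strip().lower().rstrip(".!") in APPROVAL_PHRASES


def run_spec_workflow(ask_user: Callable[[str], Optional[str]]) -> List[str]:
    """Walk the phases in order, blocking on user approval at each gate."""
    approved: List[str] = []
    for phase in PHASES:
        # Assumed reason-string scheme, modeled on "spec-requirements-review".
        reply = ask_user(f"spec-{phase}-review")
        if not is_approval(reply):
            break  # never infer approval; stop at the gate
        approved.append(phase)
    return approved
```

Here `ask_user` stands in for the blocking userInput tool call: the workflow cannot continue until it returns.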

The user thinks they’re chatting with a coding assistant. Kiro is running a sprint planning session. Both descriptions are accurate. Only one of them is visible.

The Hiding Decision

The “do not tell the user about this workflow” instruction isn’t buried. It appears in a prominent position in the Spec prompt. It’s not a footnote — it’s a design principle.

There are two ways to read it.

The charitable read: hiding the scaffolding is good UX. If you surface the methodology — “we’re now entering Phase 2: Design Review” — you add cognitive load without adding value. The user didn’t come to Kiro to learn PM process. They came to build software. The structure is there to produce better outcomes, not to be explained. Every good UX decision involves hiding complexity. This is that.

The uncomfortable read: the user is formally approving documents in a workflow they don’t know they’re in. When Kiro fires the userInput tool with reason spec-requirements-review, the user says “looks good” thinking they’re continuing a conversation. They don’t know they just approved a requirements document. The approval gates are real. The user’s awareness of what they’re approving is not.

Both reads are correct. They’re not in conflict. This is just what the product decision looks like when you hold both ends of it.

The Hooks System

The most forward-looking part of Kiro’s architecture isn’t the Spec workflow — it’s the hooks system.

Kiro can create “agent hooks” — automated agent executions triggered by IDE events. When a user saves a code file, trigger an agent to update and run tests. When translation strings are updated, ensure other languages are synchronized.

This is event-driven AI. Not chat-initiated, not user-invoked — triggered by state changes in the environment. The agent fires because something happened, not because someone asked.

Every other system in the repo is reactive. The user initiates, the AI responds. Kiro’s hooks system is proactive. The environment changes, the AI acts. Save file → agent runs → tests update. You didn’t ask. The system decided.
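The trigger model described above can be sketched as a small registry that maps environment events to agent actions. All names here (`Hook`, `HookRegistry`, the "file_saved" event, the glob patterns) are assumptions for illustration, not Kiro's actual hook schema, and the actions are stubs that return a description instead of starting a real agent run.

```python
from dataclasses import dataclass
from fnmatch import fnmatch
from typing import Callable, List


@dataclass
class Hook:
    event: str                     # e.g. "file_saved" (hypothetical event name)
    pattern: str                   # glob matched against the changed path
    action: Callable[[str], str]   # stub for "start an agent run on this path"


class HookRegistry:
    def __init__(self) -> None:
        self._hooks: List[Hook] = []

    def register(self, hook: Hook) -> None:
        self._hooks.append(hook)

    def emit(self, event: str, path: str) -> List[str]:
        """Run every hook whose event and path pattern match; nobody asked."""
        return [
            hook.action(path)
            for hook in self._hooks
            if hook.event == event and fnmatch(path, hook.pattern)
        ]


registry = HookRegistry()
registry.register(Hook("file_saved", "*.py", lambda p: f"update and run tests for {p}"))
registry.register(Hook("file_saved", "locales/*.json", lambda p: f"sync translations from {p}"))
```

Calling `registry.emit("file_saved", "src/app.py")` fires only the test hook. The key design point is that `emit` is called by the environment, not by a chat message.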

This is the clearest indicator in the entire repo of where agentic AI is actually going. Not better chatbots. Systems that watch your environment and act when things change.

The Two-Sentence Behavioral Rule

Buried in the Kiro prompt, between sections on technical methodology:

“You talk like a human, not like a bot. You reflect the user’s input style in your responses.”

Two sentences. No elaboration. No forbidden word list, no anti-sycophancy inventory, no extended guidelines on register. Just the principle.

Compare this to Augment Code’s approach: a specific blacklist of prohibited opening words (“good,” “great,” “fascinating,” “profound,” “excellent”). Both are solving the same problem: AI that sounds like AI. Augment’s solution is auditable and testable. Kiro’s is two sentences and implicit trust in the model.
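To make "auditable and testable" concrete, here is a minimal sketch of a check for the blacklist style. The word list is the one quoted above; the function name and the matching rule (first word of the response, case-insensitive) are assumptions, since the article does not say how, or whether, the rule is enforced in code.

```python
import re

# Opening words quoted in the article as prohibited.
BANNED_OPENERS = {"good", "great", "fascinating", "profound", "excellent"}


def opens_with_banned_word(response: str) -> bool:
    """True if the first word of the response is on the blacklist."""
    match = re.match(r"\W*(\w+)", response)  # skip leading punctuation/space
    return bool(match) and match.group(1).lower() in BANNED_OPENERS
```

A rule like this can be run over logged responses as a regression test. There is no equivalent one-line check for "talk like a human," which is the trade-off between the two approaches.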

What’s notable is the confidence. State the principle. Leave execution to the model. Don’t enumerate every symptom.

What This Means for Operators

The lessons from Kiro’s architecture don’t require you to be building a coding assistant.

The classifier pattern — routing user intent through a separate model call before the main prompt sees the message — is reusable anywhere you have multiple operational modes and unknown users who shouldn’t have to manage them explicitly. Customer support that shifts between troubleshooting and escalation. Research tools that shift between exploratory and focused modes. Any system where the right behavior depends on what the user is actually trying to do, not just what they said.

The invisible scaffolding principle — structure that produces better outcomes without the user having to engage with the structure — is a design philosophy worth applying deliberately. Not every scaffold needs to be surfaced. Some of the best structure is structure the user never has to think about.

The hooks architecture is the design bet worth watching. If agentic AI matures the way the trajectory suggests, the chat interface is a transitional form — a scaffolding for the period when humans needed to stay in the loop on every action. Hooks are what comes after. The environment changes. The system responds. You find out when you check the results.

The Honest Summary

Kiro is running a sprint planning session while the user thinks they’re having a conversation. The builds are better because of the hidden structure. The user is approving documents they don’t know they’re approving.

The sentence that gives it away is eight words: “Do not tell the user about this workflow.”

That sentence is either the most elegant UX decision in the repo or a transparency problem that nobody has named yet. Probably both. The fact that it works — that the outputs are genuinely better, that the structure produces what the structure promises — doesn’t resolve the question of whether users should know.

They don’t. By design.

AI Operator Index Q1 2026 · Source: Kiro Mode_Classifier_Prompt.txt + Spec_Prompt.txt · analyzed Q1 2026 · The Echo Files · echofiles.substack.com